GNUstep StepTalk is Alive and Well

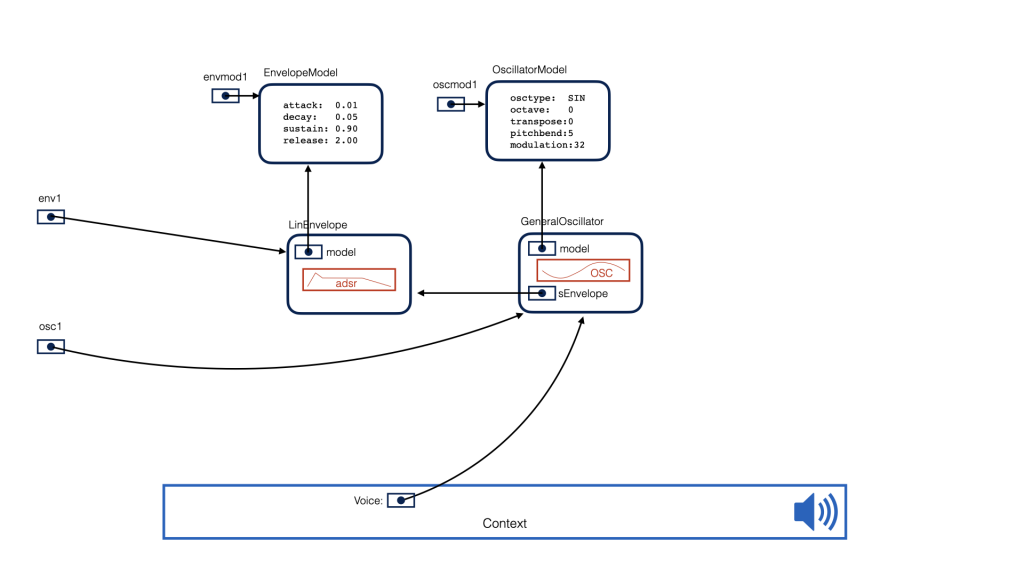

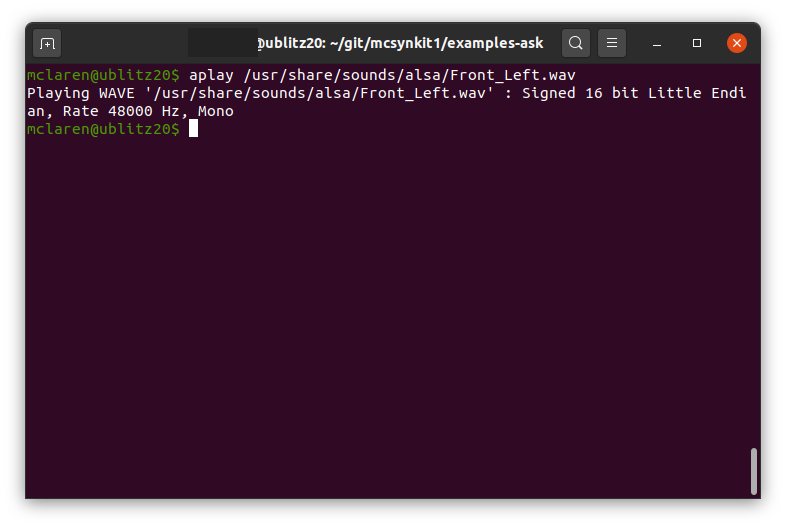

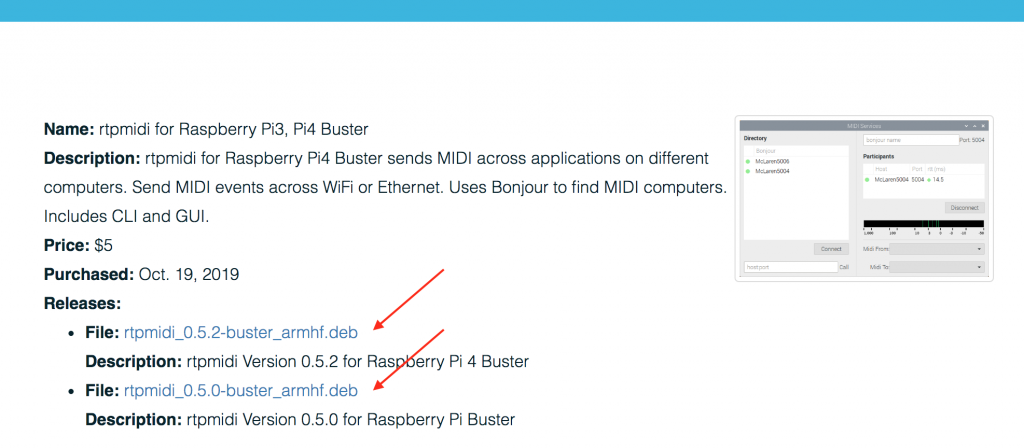

A few years ago, I explored the idea of writing a small interpreter to embed in McLaren Labs applications. The concept was to extend rtpmidi or a synth application with scripting, so that controls, behaviors or sound graphs could be customized. This would keep each application very slim while still allowing extension through scripts. (This is not a very novel idea: it’s been done many times before. 🙂 )

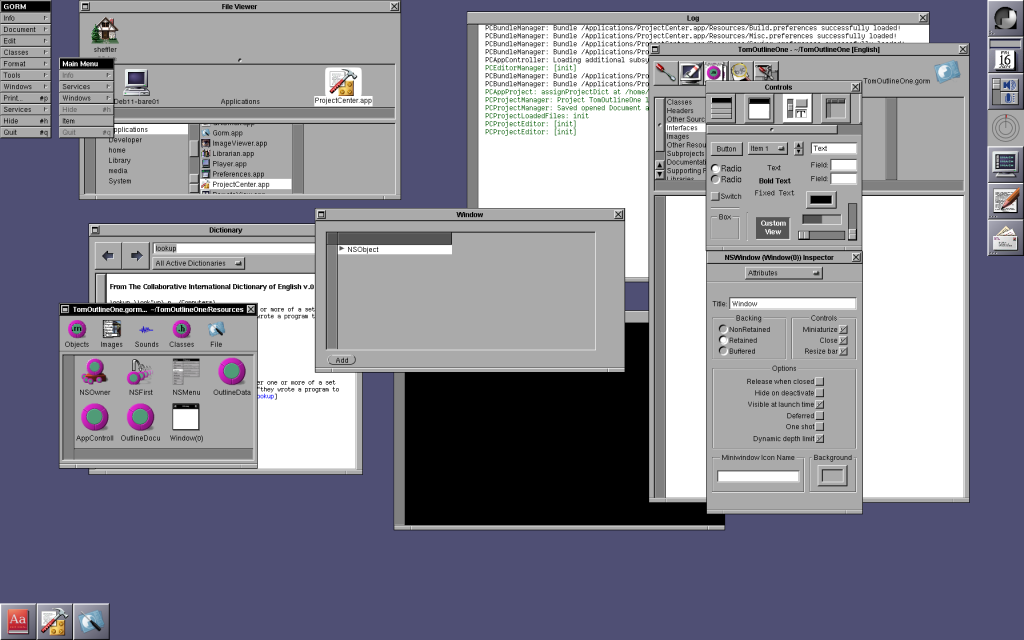

The Objective-C execution environment (libobjc) provides many hooks that make it fairly easy to call into the ObjC runtime from an interpreter, or even to bridge ObjC method calls back to an interpreter. The idea of a scripting environment to accompany a GNUstep ObjC application seemed promising, and I wrote a little interpreter (based on PostScript syntax) to test out some of the ideas.
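To make the idea concrete, here is a minimal sketch of a postfix (PostScript-flavored) interpreter that sends messages to host objects by name. It is an analogy only, not the interpreter described above: it is written in Python, `getattr` stands in for the ObjC runtime's selector lookup, and the `Synth` class, the `set_gain` method and the `send` operator are all hypothetical names invented for illustration.

```python
class Synth:
    """Hypothetical host object standing in for an application class."""

    def __init__(self):
        self.gain = 1.0

    def set_gain(self, value):
        self.gain = value
        return self.gain


def run(source, host_objects):
    """Evaluate a whitespace-separated postfix program and return the stack.

    - a name found in host_objects pushes that object
    - '/name' pushes the literal name (used here as a selector)
    - 'send' pops selector, argument and receiver, then calls the method
    - anything else is parsed as a number and pushed
    """
    stack = []
    for token in source.split():
        if token == "send":
            selector = stack.pop()
            argument = stack.pop()
            receiver = stack.pop()
            # Look the method up by name at run time (assumed analogue of
            # resolving a selector against a receiver in the ObjC runtime).
            method = getattr(receiver, selector)
            stack.append(method(argument))
        elif token.startswith("/"):
            stack.append(token[1:])
        elif token in host_objects:
            stack.append(host_objects[token])
        else:
            stack.append(float(token))
    return stack


synth = Synth()
result = run("synth 0.5 /set_gain send", {"synth": synth})
```

The script text names both the receiver and the method, so the host application needs no script-specific glue beyond exposing its objects; that late-bound lookup is what makes a dynamic runtime a comfortable target for an embedded interpreter.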

Along the way, I learned about a much more mature project in libs-steptalk. Among other things, it provides a Smalltalk-like language for extending applications or even gluing them together in the GNUstep workspace. It seemed very old, but there were some tremendous ideas in there: I wondered if I could learn enough about it to use it, and I wondered if it still worked.

(Spoiler Alert! I did and it does.)
